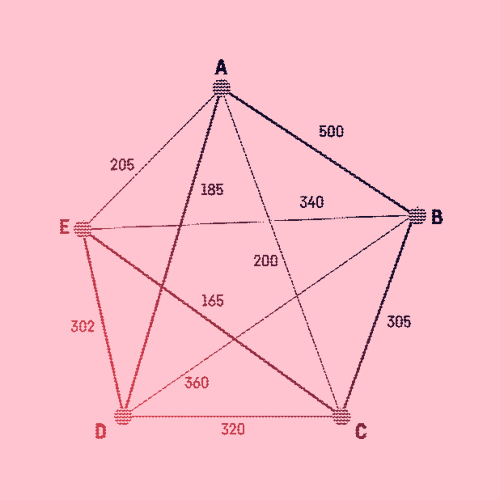

The K-Nearest Neighbors algorithm (or kNN) can be used to solve both classification and regression problems. Simple and easy-to-implement, this supervised machine learning algorithm is one of the most widely used, from genetics to Netflix recommendations.

The kNN algorithm classifies a data point according to its neighbors’ classification. It assumes that similar things exist in proximity, hinging on this assumption being true enough for the algorithm to be useful.

The origins of kNN can be found in research carried out for the U.S. armed forces. Evelyn Fix (1904–1965) was a mathematician and statistician who taught statistics at Berkeley. Joseph Lawson Hodges Jr. (1922–2000) was a fellow statistician at Berkeley who worked with the Twentieth Air Force of USAF (United States Air Forces) from 1944. Putting their brilliant minds together, they wrote a technical analysis report in 1951 for USAF. It introduced discriminant analysis, a non-parametric classification method. However, the paper was never officially published — most likely for confidentiality reasons in the wake of World War II.

Championing Classification

This is the first known introduction of non-parametric classification and would later become the famous kNN algorithm. In 1967, Thomas Cover and Peter Hart proved an upper bound error rate with multiclass kNN classifications. Since then, there have been many improvements and new approaches continue to emerge.

“Birds of a feather flock together.”

Today, the kNN algorithm is often used as a benchmark for more complex classifiers, like the Support Vector Machines (SVM) and the Artificial Neural Networks (ANN). Despite its simplicity, kNN fares better than many more powerful classifiers. It proves useful in areas ranging from genetics to data compression, and economic forecasting. The kNN algorithm is also used in video recognition, image recognition, handwriting detection, and speech recognition — as well as the customer reading or watching recommendations made by companies like Amazon and Netflix.

Key Dates

-

1951

Non-Parametric Pattern Classification

Non-parametric pattern classification — that would later become kNN — is first introduced in an unpublished technical report for the United States Air Force.

-

1967

Nearest Neighbor Pattern Classification

Thomas Cover and Peter Hart publish “Nearest Neighbor Pattern Classification,” which prove an upper bound error rate with multiclass kNN classification. This paper will pave the way for new rejection studies.

-

1985

Fuzzy kNN

James Keller develop a “fuzzy” version of the kNN algorithm. With a lower error rate, it compares well against more sophisticated pattern recognition procedures.