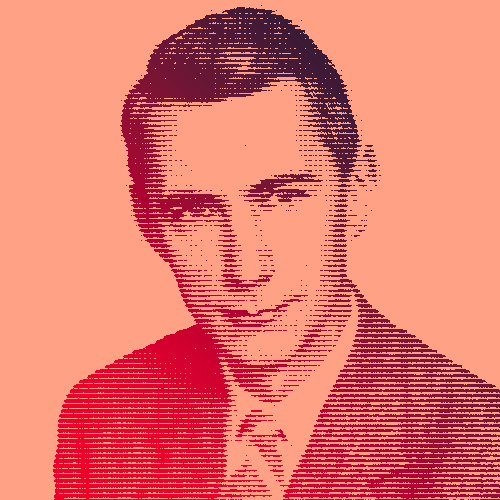

Dr. Claude Shannon’s creation of information theory made the digital world as we know it today possible. A mathematician and computer scientist, Shannon is responsible for the concept of the “bit” (the basic unit of information), digital compression, and strategies for encoding and transmitting information reliably between two endpoints.

Shannon received bachelor’s degrees in electrical engineering and mathematics from the University of Michigan in 1936, then moved on to MIT, where he earned a master’s degree in electrical engineering and a Ph.D. in mathematics, graduating in 1940. While at MIT, he worked with Dr. Vannevar Bush on the “differential analyzer,” a mechanical analog computer designed to solve differential equations by integration.

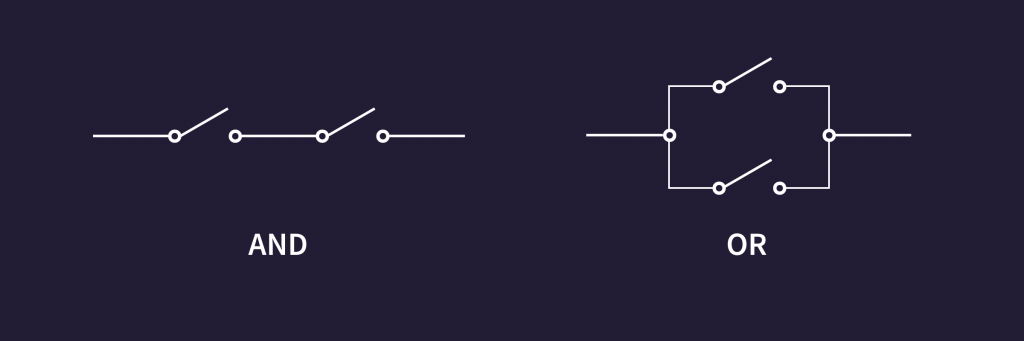

Shannon’s master’s thesis “A Symbolic Analysis of Relay and Switching Circuits” used Boolean algebra to establish the theory behind digital circuits — which are fundamental to the function of today’s computers and telecommunications equipment.

Ushering in the Information Age

In 1941, Shannon took a job in the mathematics department at American Telephone and Telegraph’s Bell Laboratories in New York City, where he contributed to work on anti-aircraft missile control systems and cryptography. His work on secret communication systems was used to build the system that Roosevelt and Churchill used during World War II. His research also laid the foundation for digital computing, showing how logical statements could be translated into 1’s and 0’s.

“Information is the resolution of uncertainty.”

Dr. Robert G. Gallager, a professor of electrical engineering who worked with Shannon at MIT, said, “Shannon was the person who saw that the binary digit was the fundamental element in all of communication. That was really his discovery, and from it the whole communications revolution has sprung.”

“My mind wanders around and I conceive of different things day and night. Like a science fiction writer, I’m thinking, what if it were like this?”

Shannon’s most important paper, “A Mathematical Theory of Communication,” defined a mathematical notion by which information could be quantified and highlighted how information could be delivered successfully over communication channels such as phone lines or wireless connections.
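To give a sense of what quantifying information means, here is a minimal sketch of the entropy measure from that paper, H = -Σ p·log2(p), which gives the average information of a source in bits. The function name `entropy_bits` and the coin examples are illustrative assumptions, not taken from Shannon's text.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy in bits: the sum of -p * log2(p) over all outcomes.

    `probabilities` is assumed to be a list of outcome probabilities that
    sum to 1. Outcomes with probability 0 contribute nothing and are skipped.
    """
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


# Illustrative sources (hypothetical examples, not from the paper):
fair_coin = entropy_bits([0.5, 0.5])    # maximally uncertain two-way choice
biased_coin = entropy_bits([0.9, 0.1])  # mostly predictable, so less information
certain = entropy_bits([1.0])           # no uncertainty at all

print(f"fair coin:   {fair_coin:.3f} bits")
print(f"biased coin: {biased_coin:.3f} bits")
print(f"certain:     {certain:.3f} bits")
```

A fair coin flip carries exactly one bit, while a heavily biased coin carries less than half a bit per flip. That gap is what makes compression possible: predictable sources need fewer bits on average.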

“I am very seldom interested in applications. I am more interested in the elegance of a problem. Is it a good problem, an interesting problem?”

In addition to his contributions to the science and mathematics communities, Shannon also enjoyed having fun — he could be found juggling while riding a unicycle down the halls of Bell Labs, working on chess-playing machines, or infusing humor and curiosity into his research.

“I visualize a time when we will be to robots what dogs are to humans. And I am rooting for the machines.”

In 1973, Shannon received the Shannon Award, named in his honor and given by the Information Theory Society of the Institute of Electrical and Electronics Engineers (IEEE). It remains the highest possible honor in the community of researchers dedicated to advancing the field he created.

Key Dates

- 1940: Claude Shannon publishes his master’s thesis, “A Symbolic Analysis of Relay and Switching Circuits.”

- 1941: Claude Shannon joins the mathematics department at Bell Labs.

- 1948: Claude Shannon’s most important paper, “A Mathematical Theory of Communication,” is published.